AI Governance and Responsible Use Policy

Effective from: 1st January 2026

1. Purpose

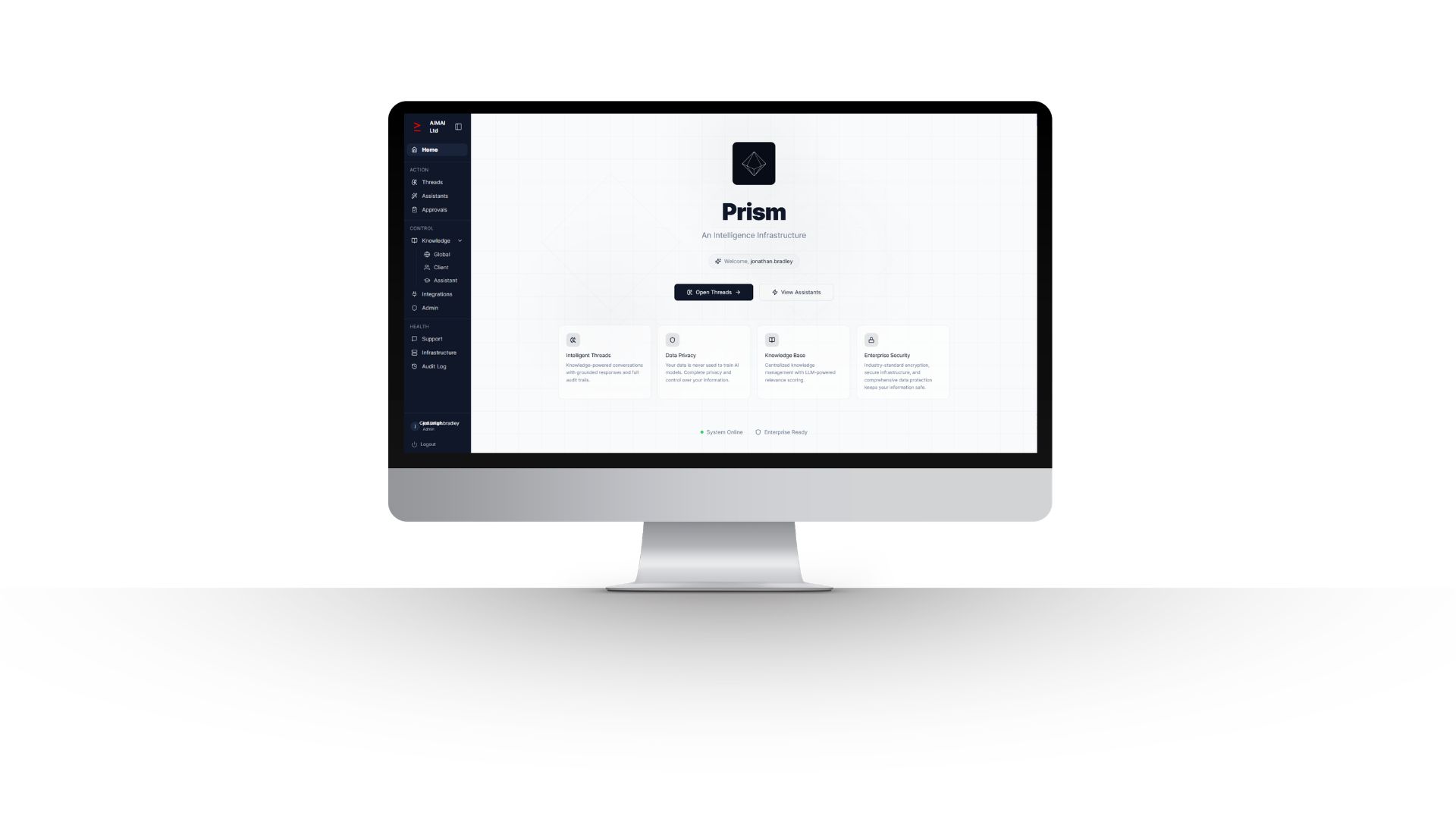

The purpose of this policy is to define how AIMAI Ltd governs the design, deployment, operation, use, monitoring, and continuous improvement of artificial intelligence across PRISM and related services. This policy exists to ensure that AI is used lawfully, responsibly, securely, and in a way that supports consistent operational outcomes while reducing avoidable risk.

AIMAI’s position is that AI should not operate as an unmanaged or ad hoc tool. AI use must be bounded by policy, governed by approved knowledge, supported by human oversight where required, and operated within clear technical, commercial, and accountability structures. Governance is not treated as a blocker to adoption; it is part of how AI becomes usable at scale.

2. Scope

This policy applies to AIMAI Ltd personnel, contractors, authorised client users, client organisations, and approved third parties who access or use PRISM or related AIMAI services. It applies across the full governed AI environment, including but not limited to:

- PRISM workspaces and client environments

- Threads and client-level threads

- Knowledge Base and client-level knowledge

- Approvals and knowledge publishing workflows

- Audit records and activity logs

- Custom assistants and workflow-specific AI implementations

- Integrations with third-party systems

- Platform administration, permissions, and access controls

This policy applies whether AI output is used internally by AIMAI, by client teams, or in shared delivery contexts involving clients, partners, or other approved users.

3. Governance Principles

- Lawful and authorised use: AI must only be used for legitimate, authorised, and lawful business purposes.

- Human accountability: Responsibility for decisions remains with people, not models or automated outputs.

- Knowledge-grounded operation: AI should rely on approved and governed knowledge rather than uncontrolled or inconsistent inputs.

- Least-privilege access: Access to systems, data, workspaces, and governance functions must be limited to what is necessary.

- Transparency and auditability: Use of AI within PRISM should be capable of review through records, context, and audit functionality.

- Security by design: AI use must operate within AIMAI’s security, access control, logging, and infrastructure standards.

- Continuous improvement: Policies, prompts, knowledge structures, workflows, and controls must evolve as usage, risks, and technology change.

4. Platform Model and Governance Context

PRISM is AIMAI’s governed AI platform for deploying and operating AI assistants and workflow intelligence in a controlled environment. The platform is designed to support safer AI adoption through dedicated infrastructure, governance content, shared workspaces, approved knowledge structures, and audit-supporting functionality.

The platform model is based on structured rather than ad hoc AI use. Knowledge Base acts as a central source of truth, Approvals supports controlled publishing of knowledge, Threads keeps work visible and repeatable, client-level knowledge and threads maintain contextual separation, and Audit supports review of how AI has been used and what context was relied upon at the time.

AIMAI continuously evaluates emerging AI and large language model capabilities. Additional providers or models may be adopted over time where this improves outcomes, capability, or resilience. Any new sub-processors will be disclosed as described in section 12.

5. Roles and Responsibilities

- Policy Owner: Owns this policy, approves material changes, and is accountable for overall governance direction.

- AIMAI Leadership: Oversees governance posture, risk tolerance, and alignment between platform capability, client commitments, and policy standards.

- Platform Administrators: Manage access, permissions, configuration, controls, and administrative safeguards.

- Knowledge Approvers: Review and approve knowledge before it is relied upon as governed context.

- Users: Must use PRISM and related AI tools responsibly, within authorisation, and with appropriate review of outputs.

- Clients and Client Administrators: Are responsible for ensuring their users operate within agreed controls, approved purposes, and their own internal obligations.

- Third Parties and Integration Partners: May only access or connect to the platform where approved and governed under appropriate commercial and technical arrangements.

6. Permitted and Expected Use

Use of AI through PRISM is permitted where it supports authorised business activity and remains within approved controls. Permitted use includes:

- drafting, summarising, analysing, and supporting business workflows

- working within shared or client-level threads grounded in approved context

- using approved knowledge to improve consistency, speed, and operational quality

- supporting decision-making, provided that a responsible person remains accountable

- using custom assistants and integrations for agreed workflow purposes

- maintaining and improving governed knowledge and policy content through approved processes

Users are expected to exercise judgement, check outputs where appropriate, and escalate any risk, uncertainty, weakness, or misuse.

7. Prohibited Use

The following activities are prohibited:

- using PRISM or related services for unlawful, fraudulent, harmful, abusive, or deceptive purposes

- attempting unauthorised access to systems, data, accounts, workspaces, integrations, or administrative functions

- probing, testing, scanning, reverse engineering, disrupting, or attempting to compromise the platform without written authorisation

- uploading, processing, or sharing content that the user is not authorised to use

- introducing malware, malicious code, or activity intended to impair service availability, confidentiality, or integrity

- using AI outputs as a substitute for required human decision-making in operational, legal, regulatory, financial, or client-sensitive matters

- circumventing governance, approvals, permissions, or audit controls

- using personal, confidential, regulated, or sensitive data in a way that is unauthorised, unnecessary, or inconsistent with applicable obligations

- presenting AI-generated output as verified fact where it has not been checked appropriately

8. Human Oversight and Decision Accountability

AI may assist with analysis, drafting, recommendation, classification, synthesis, and workflow support, but responsibility for decisions remains with accountable people. Human review is mandatory where outputs may influence legal, regulatory, financial, contractual, employment, client, safety, or other materially significant outcomes.

Users must review outputs in proportion to the risk and impact of the task. Higher-impact use requires stronger review. A user must not rely on AI output without suitable verification where there is any meaningful consequence if the output is wrong, incomplete, misleading, outdated, or not grounded in the correct context.
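
For illustration only, the mandatory-review rule above could be expressed as a simple check. This is a minimal sketch and not a description of how PRISM implements review: the `Task` type, its fields, and the `human_review_required` function are hypothetical names introduced here, and only the list of outcome areas is taken from this policy.

```python
from dataclasses import dataclass

# Outcome areas this policy names as requiring mandatory human review.
MANDATORY_REVIEW_AREAS = frozenset({
    "legal", "regulatory", "financial", "contractual",
    "employment", "client", "safety",
})


@dataclass(frozen=True)
class Task:
    """Hypothetical description of a piece of AI-assisted work."""
    description: str
    outcome_areas: frozenset  # areas the output may influence
    materially_significant: bool = False  # catch-all for other significant outcomes


def human_review_required(task: Task) -> bool:
    """Return True when the policy makes human review mandatory."""
    touches_named_area = bool(task.outcome_areas & MANDATORY_REVIEW_AREAS)
    return touches_named_area or task.materially_significant


contract_task = Task("Draft a contract clause", frozenset({"contractual"}))
notes_task = Task("Tidy internal meeting notes", frozenset({"internal"}))
print(human_review_required(contract_task))
print(human_review_required(notes_task))
```

A check of this kind can only flag the cases where review is mandatory. Proportionate review of lower-impact work still depends on the judgement of the user.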

9. Knowledge Governance

AIMAI’s governance model relies on controlled knowledge, not unmanaged prompt inputs. PRISM’s Knowledge Base is intended to act as a reliable source of approved business context. Knowledge submitted into the system may be proposed by a broad range of contributors, but where approvals are in place, only authorised approvers may publish or validate knowledge for operational reliance.

The objectives of knowledge governance are to:

- reduce drift, inconsistency, and duplication

- ensure important business, client, regulatory, or procedural context is captured centrally

- keep outputs aligned to the organisation’s intended standards

- preserve knowledge within the business rather than leaving it fragmented across private tools or individuals

- create a compounding improvement loop as operational learning is captured over time

Client-level knowledge must remain appropriately separated and only accessible to authorised users with a legitimate need.
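
As an illustrative sketch of the two rules in this section (anyone may propose knowledge, only authorised approvers may publish it, and client-level knowledge stays separated), the following example models them in a few lines. All names (`KnowledgeItem`, `publish`, `visible_to`, `NotAuthorised`) are hypothetical and do not describe PRISM's actual data model. Treating items without a client as organisation-wide is also an assumption made for this example.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional, Set


class Status(Enum):
    PROPOSED = "proposed"
    PUBLISHED = "published"


class NotAuthorised(Exception):
    """Raised when someone who is not an approver tries to publish."""


@dataclass
class KnowledgeItem:
    title: str
    proposed_by: str
    client_id: Optional[str] = None  # assumption: None means organisation-wide
    status: Status = Status.PROPOSED
    approved_by: Optional[str] = None


def publish(item: KnowledgeItem, approver: str, approvers: Set[str]) -> KnowledgeItem:
    """Publish an item only if the acting user is an authorised approver."""
    if approver not in approvers:
        raise NotAuthorised(f"{approver} is not an authorised approver")
    item.status = Status.PUBLISHED
    item.approved_by = approver
    return item


def visible_to(item: KnowledgeItem, user_client_ids: Set[str]) -> bool:
    """Only published items are relied upon; client items need matching access."""
    if item.status is not Status.PUBLISHED:
        return False
    return item.client_id is None or item.client_id in user_client_ids


item = KnowledgeItem("Refund procedure", proposed_by="alice", client_id="client-a")
publish(item, approver="bob", approvers={"bob"})
print(visible_to(item, {"client-a"}), visible_to(item, {"client-b"}))
```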

10. Data Protection and Information Handling

AI use through PRISM must align with AIMAI’s privacy and information handling obligations. AIMAI may act as a controller or processor depending on the service context. Personal data, business data, client content, account information, usage data, and integrated third-party data must only be collected, processed, stored, and used where there is a lawful and operational basis to do so.

- Users must only input data they are authorised to use.

- Only the minimum data reasonably required for the task should be used.

- Special-category, regulated, or otherwise sensitive data must not be processed without appropriate authority, necessity, and safeguards.

- Client data and workspace content must be handled in line with access controls, contractual requirements, and relevant legal obligations.

- International data transfers must be managed using appropriate transfer mechanisms, including the UK International Data Transfer Agreement or the UK Addendum to the EU Standard Contractual Clauses where applicable.

11. Security and Access Control

AIMAI operates PRISM and related services within a controlled security model. Hosting, storage, monitoring, and core service operation are delivered primarily on AWS infrastructure, including Amazon EC2, RDS, S3, SES, SNS, and CloudWatch. SSL/TLS protection is supported through Let’s Encrypt, DNS is managed through Fasthosts, and source code hosting and deployment workflows are managed through GitHub.

All other functionality, including user management, logging, workflow engine operation, and the API layer, is developed and operated in-house on AWS infrastructure.

The following controls apply:

- unique user accounts and credential protection

- least-privilege permissions and access segregation

- timely removal of access when it is no longer required

- periodic access review

- secure transmission of data using SSL/TLS

- monitoring, logging, and operational review

- environment and client separation where applicable

- incident escalation and response procedures

12. AI Providers, Infrastructure Providers, and Sub-processors

PRISM and related AIMAI services may rely on third-party providers to deliver infrastructure and AI capability. Current providers include:

- Amazon Web Services (AWS): EC2, RDS, S3, SES, SNS, and CloudWatch, used for hosting, database, storage, email, SMS, and monitoring

- OpenAI: general large language model processing

- Anthropic: large language model processing

- Google: Imagen image generation

- Deepgram: audio transcription and speech processing

- GitHub: source code hosting and deployment

- Fasthosts: DNS management

- Let’s Encrypt: SSL/TLS certificate issuance

- Stripe: payment processing (planned)

Third-party connectors, including Sage 50, CRM systems, accounting platforms, and similar services, may be integrated on a client-by-client basis and will be disclosed as applicable for the relevant engagement.

AIMAI may adopt additional AI providers, models, or supporting services over time. Any such changes will be assessed through AIMAI’s governance and security processes and disclosed where relevant.

13. Monitoring, Logging, and Audit

AIMAI monitors platform usage and maintains logs and audit-supporting records to support service integrity, troubleshooting, governance oversight, misuse investigation, and continuous improvement. PRISM’s audit-oriented capabilities are intended to make it easier to review what was asked, what was produced, and what context or approved knowledge was relied upon at the time.

Monitoring and audit records may be used to:

- review user activity and access patterns

- investigate incidents, misuse, or suspected breaches

- support governance assurance and client confidence

- improve prompts, workflows, permissions, and controls

- evidence responsible use and operational accountability
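
To make the idea of an audit-supporting record more concrete, the sketch below shows one possible shape for a record that captures what was asked, what was produced, and which approved knowledge was relied upon. It is an assumption-based example, not PRISM's actual log format: the `AuditRecord` fields and the `make_record` and `to_log_line` helpers are hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Iterable, Tuple


@dataclass(frozen=True)
class AuditRecord:
    """Hypothetical immutable record of a single AI interaction."""
    user_id: str
    thread_id: str
    prompt: str                      # what was asked
    output: str                      # what was produced
    knowledge_refs: Tuple[str, ...]  # approved knowledge relied upon
    recorded_at: str                 # UTC timestamp, ISO 8601


def make_record(user_id: str, thread_id: str, prompt: str,
                output: str, knowledge_refs: Iterable[str]) -> AuditRecord:
    """Build a record stamped with the current UTC time."""
    return AuditRecord(
        user_id=user_id,
        thread_id=thread_id,
        prompt=prompt,
        output=output,
        knowledge_refs=tuple(knowledge_refs),
        recorded_at=datetime.now(timezone.utc).isoformat(),
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialise the record as one JSON line suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


record = make_record("user-1", "thread-9", "Summarise the refund procedure",
                     "Refunds are processed as follows.", ["kb-42"])
print(to_log_line(record))
```

Keeping the knowledge references alongside the prompt and output is what allows a later reviewer to see which context was relied upon at the time.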

14. Quality, Fairness, and Reliability

AI outputs can be useful, but they are not inherently correct, complete, unbiased, or suitable for every context. AIMAI therefore requires a controlled approach to quality and fairness.

- Outputs should be grounded in relevant approved context wherever possible.

- Users must challenge outputs that appear incorrect, overconfident, inconsistent, or unsupported.

- Important workflows should be reviewed and iterated based on actual usage and outcomes.

- Where a workflow may create bias, inconsistency, or adverse impact, additional review and control should be applied.

- AI should support better judgement and consistency, not replace responsibility.

15. Incident Management and Breach Reporting

Any suspected or actual misuse, breach, unauthorised access, data incident, policy violation, or material AI failure must be reported without delay through the appropriate AIMAI support or governance route. Incidents must be assessed, contained, investigated, and remediated in line with AIMAI’s operational and security processes.

Examples of reportable incidents include:

- suspected account compromise or credential exposure

- unauthorised access to client or organisational data

- materially incorrect AI output causing or likely to cause significant impact

- breach of confidentiality or improper information sharing

- use of unauthorised integrations, models, or data sources

- attempts to bypass controls, approvals, or permissions

16. Training and Awareness

AIMAI will maintain this policy as part of a broader responsible use and governance framework. Relevant personnel should be made aware of their responsibilities, including appropriate use of AI, secure handling of information, reliance on approved knowledge, access discipline, and the need for human review in higher-risk contexts.

Where PRISM is deployed for client organisations, AIMAI may support onboarding and governance familiarisation as part of implementation and operational rollout.

17. Enforcement

Breaches of this policy may result in action proportionate to the severity and nature of the issue. This may include suspension of access, restriction of permissions, removal of content, required remediation steps, contractual action, escalation to client administrators, or referral to regulators or law enforcement where appropriate.

AIMAI reserves the right to intervene where platform integrity, client safety, legal compliance, security posture, or responsible use standards are at risk.

18. Review and Maintenance

This is a living policy. AIMAI will review and update it periodically and whenever there is a material change to the business, the PRISM platform, the AI provider landscape, legal or regulatory obligations, information security posture, or the overall risk profile.

Review activity may also be triggered by operational learning, incidents, major product changes, supplier changes, or evolving best practice in AI governance and responsible use.

19. Contact

AIMAI Ltd

Office 18, The Globe Innovation Centre

Slaithwaite

HD7 5JN

Telephone: 01484 767892

Email: info@aimai.co.uk